GSA SER Global Site List

Understanding the GSA SER Global Site List

For anyone running automated link building campaigns, the term GSA SER global site list surfaces quickly as a fundamental resource. It is not merely a static database. It is a curated, continuously evolving collection of target URLs that GSA Search Engine Ranker uses to identify and post to thousands of platforms across the web. Without a properly configured global site list, the software would fire blind, wasting resources on domains that no longer exist or that return poor success rates.

What Exactly Constitutes a Global Site List?

A GSA SER global site list typically contains three distinct categories of URLs, each stored in dedicated text files within the engine’s folder. Users find the identified platforms list, which holds domains where the software previously succeeded in registering or posting. The failed log list records sites where submission attempts broke entirely, often due to deleted platforms or hardened security. Finally, the global blacklist prevents the engine from repeatedly crawling known traps like malware domains, link farms already penalized, or administrative pages. The synergy between these lists is what prevents a campaign from turning into a chaotic spam run.

Why a Fresh Site List Matters for Success

Many newcomers attempt to scrape their own targets from search engine results, but a hand-verified GSA SER global site list offers a substantial head start. Pre-vetted lists can save months of manual testing. They contain platforms with known registration fields, stable footprints, and correctly matched posting engines. When a list is updated regularly, it prunes expired web 2.0 properties, forum installations that have migrated to a new CMS, and comment systems that have switched off guest access. This maintenance can be the difference between a verified rate in the single digits, such as 5%, and one as high as 85% during a campaign run.

Anatomy of a High-Quality Site List

Not all lists are created equal. The backbone of any reliable GSA SER global site list is de-duplication. Redundant URLs simply burn threads checking the same domain twice. Precision in footprint matching is equally vital; the engine needs to know whether a target runs WordPress, Drupal, or a custom script so that it invokes the correct platform module. The best lists also separate targets by language and do-follow status, giving users granular control. A raw dump of 300,000 URLs will usually perform worse than a tight, classified collection of 50,000 targets where every entry carries a recent verification date.
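The de-duplication step described above can be illustrated with a short sketch. It assumes the list is available as plain text with one URL per line, and it treats two entries as duplicates when they share a host after lowercasing and dropping a leading "www."; both are assumptions made for illustration, not a description of how GSA SER itself compares entries. The function name dedupe_by_host is hypothetical.

```python
from urllib.parse import urlsplit


def dedupe_by_host(urls):
    """Keep the first URL seen for each host, preserving input order.

    Hypothetical helper: "duplicate" here means "same host", which is
    an assumption for this sketch, not GSA SER's own rule.
    """
    seen = set()
    unique = []
    for raw in urls:
        url = raw.strip()
        if not url:
            continue  # ignore blank lines
        # urlsplit only fills in the hostname when a scheme is present,
        # so add one for bare entries such as "example.com/page".
        candidate = url if "://" in url else "http://" + url
        try:
            host = urlsplit(candidate).hostname
        except ValueError:
            continue  # skip malformed entries
        if host is None:
            continue  # skip lines that do not parse as a URL
        if host.startswith("www."):
            host = host[4:]
        if host not in seen:
            seen.add(host)
            unique.append(url)
    return unique


sample = [
    "http://Example.com/a",
    "https://www.example.com/b",
    "",
    "forum.example.org/register",
    "http://forum.example.org/login",
]
print(dedupe_by_host(sample))
# prints ['http://Example.com/a', 'forum.example.org/register']
```

Keeping the first occurrence preserves the original ordering of the list. A stricter variant could compare full URLs instead of hosts if several distinct pages on one domain are all wanted.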

Integrating the List with Your Campaign Workflow

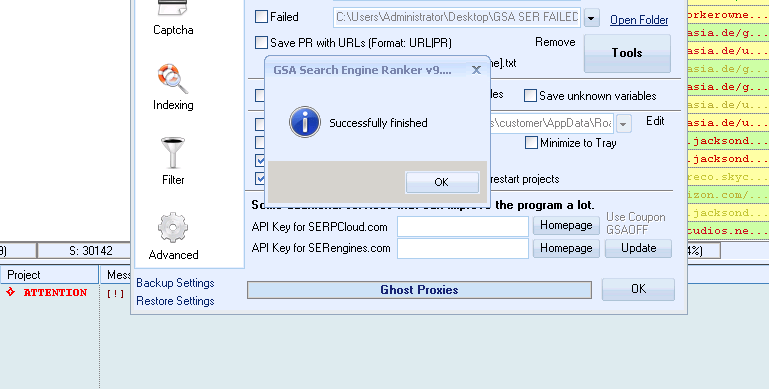

Loading a GSA SER global site list goes beyond a simple import. The engine merges your list with its internal verified database, so placing fresh URLs into the correct folder before launching a project is mandatory. Once loaded, the software starts sending verification attempts using the proxies assigned. Smart users right-click on the main project window and force a retry of the failed list after swapping out blocked proxies, because many sites refuse connections from data center IP ranges by default.

Common Pitfalls and How the List Prevents Them

A neglected GSA SER global site list causes a cascade of problems. The software wastes proxy bandwidth on dead sites. It leaves footprints by posting only to the same huge public networks while ignoring thousands of obscure but powerful niche platforms. Worst of all, an outdated blacklist allows the engine to follow redirect chains into dangerous neighborhoods, potentially infecting the server with malware if automatic downloading is not capped. A well-maintained list keeps the link graph diverse, natural-looking, and safe from algorithmic deindexing penalties.

Automating Submissions with Engine-Specific Targets

When the global list is paired with the right set of engine scripts, the operation becomes nearly hands-off. The GSA SER global site list marks which engine class (such as Article, Social Bookmark, or Wiki) should attempt each URL. An incoming update might add hundreds of fresh Social Network targets complete with the custom registration strings they require. Users who pair such a list with branded email catchalls and spun content see a steady stream of verified link placements across IPs and platforms that manual outreach could never reach at scale.

Keeping Your Site List Authoritative

Treating a global site list as a one-time purchase leads to diminishing returns. Platforms change their software stacks constantly. A forum that worked yesterday might install a CAPTCHA today that standard CAPTCHA-solving services cannot handle. The most effective GSA SER global site list providers actively probe their targets with headless browsers, stripping out not only dead domains but also those requiring JavaScript rendering that GSA cannot handle natively. This active verification turns a static text file into a living map of workable targets.

Customization Beyond the Defaults

Advanced operators never stop at the default GSA SER global site list. They split their lists by project goals, creating separate verified files for tier-1 and tier-2 campaigns. A tier-1 list might retain only platforms that allow long-form content and have high domain authority, while a tier-2 list expands aggressively to comment fields and low-quality guestbooks. This segmentation stops a single low-quality blast from contaminating money-site relevancy. The global blacklist can also be extended with footprints of sites that cause excessive CPU usage due to infinite redirect loops.

The Role of Community-Shared Intelligence

Private communities often share trimmed versions of the GSA SER global site list built from private blog networks and internal test benches. These lists skip the public scraped junk entirely and focus on domains the community actually controls or knows to be lenient. Merging a community list with a commercial one widens the footprint diversity to a point where no two campaigns leave the same pattern. This diversity, driven by the global site list, remains one of the strongest defenses against footprint-based spam detection algorithms.

Ultimately, the engine is only as smart as the input it receives. A sharp, updated, and well-filtered GSA SER global site list transforms a generic posting tool into a surgical link acquisition machine that performs consistently without human babysitting.